Workshops and Seminars

Spat Workshop @UC Berkeley

An introduction to the Ircam Spat library, a software suite dedicated to real-time sound spatialization and artificial reverberation. For composers, computer musicians, sound engineers, sound designers, video artists or scientists with interest in spatial sound.

CNMAT main room

1750 Arch Street – Berkeley, CA

May 23-24-25, 2016 – 2pm-6pm

⇒ More info

HCI Meeting Series @UC Berkeley

A series of meetings and activities around human-computer interactions in music

at the Center for New Music and Audio Technologies (CNMAT) and the Berkeley Institute of Design (BiD),

University of California, Berkeley in May-June, 2016.

First MusICAL meeting day

Interaction Calcul Algorithmique Langages appliqués à la Musique

Amiens, 14 December 2015

⇒ MusICAL (Réseau National des Systèmes Complexes)

- Presentation: Interactions et modèles d'analyse en CAO: Supervision d'un modèle formel pour la manipulation des structures rythmiques

Jean Bresson, Florent Jacquemard, Adrien Ycart.

Interactivity in Music Composition and Performance

EFFICACe international workshop

May 5th, 2015.

CIRMMT (Centre for Interdisciplinary Research in Music Media and Technology)

Schulich School of Music – McGill University, Montréal (CA)

Interactive Music and Notation: London'14

AFIM work-group "Les nouveaux espaces de la notation musicale".

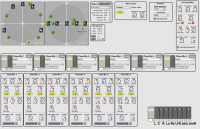

Workshop @NIME'14: Interactive Music Notation and Representation

Goldsmiths University, London, 30/06/2014

The Interactive Music Notation and Representation Workshop, co-located with the 14th International Conference on New Interfaces for Musical Expression, gathered artists, researchers and application developers to compare views and needs in the field of music notation and representation inspired by contemporary practices in interactive and live music, including representational forms emerging from live coding.

⇒ Notation Workshop @NIME

NIME'14, Goldsmiths, University of London

30 June 2014, 9h30-13h

Seminar on Music Notation and Computation @ C4DM

Queen Mary University, London, 30/06/2014

Hosted by the Center for Digital Music at Queen Mary University, this seminar focused on representations and computational approaches for generating, optimizing and processing music notation in computer music systems.

⇒ C4DM Notation Seminar

Center For Digital Music, Queen Mary University of London

30 June 2014, 15h-17h30

IRCAM : Groupe de Recherche Rythme/Temps Musical

IRCAM, Paris, 4/11 2014 (16h-18h)

A special session of the IRCAM work group on time and rhythm will be dedicated to presentations of works from the project. A major theme in the project concerns the interactions of computer-aided composition with different kinds of processes with heterogeneous temporalities. Rhythm quantification and the representation of time structures linking continuous variations and rhythm are part of the issues addressed in this context.

- John MacCallum : Polytemporal Composition. This presentation will be centered on MacCallum's polytemporal work – compositions in which the musicians play in independent and smoothly-varying tempos - with a focus on the compositional, notational, and performative challenges. [video]

- Florent Jacquemard : Rhythm tree processing for rhythm quantization. We will present some ideas for rhythm quantization based on models and algorithms for tree-structured data processing. This includes k-best parsing procedures for grid selection in on-the-beat quantization, weighted tree automata for the representation of the user’s style, and term rewriting systems for the production of rhythm notations. [video]

Lisp for music technology - Workshop at the European Lisp Symposium ELS'2014

IRCAM, 6/05/2014

The power and expressivity of Lisp make it a valuable language to musicians for exploring high-level compositional processes, and this language is a fundamental support for computer-aided composition research and creation. In this session we will present an overview of our current projects and developments, and discuss the challenges, issues and perspectives for using Lisp in new music technologies such as digital signal processing and real-time systems.

⇒ 7th European Lisp Symposium

IRCAM, Paris. 5-6 May, 2014

Time, rhythm and arithmetics

MaMuX seminar series, IRCAM, 6/12/2013

In the framework of the Ircam MaMuX seminar series we propose this special session dedicated to formal/mathematical theory of time and rhythm in music composition and performance. Invited speakers: Philippe Riot, Alain Le Méhauté (Federal University of Kazan, Russia), Jean-Louis Giavitto (IRCAM - CNRS), Karim Haddad (Composer, IRCAM).

Invited & Guests

OM-Darwin : composing with genetic algorithms in OpenMusic

Geof Holbrook : Séminaires Recherche et Création, IRCAM

IRCAM musical research residency

22/06 2015 (12h-13h)

OM-Darwin is an OpenMusic library for composing with genetic algorithms. The focus is on flexibility, such that the composer can freely combine patches that generate musical material from a set of parameters, and patches that measure the suitability of potential solutions. On the generative side, the library offers a collection of built-in musical objects, as well as an intuitive system for designing customized constructs. It also implements a rule-based system familiar to users of constraint-solving systems, which can be used in conjunction with user-defined measuring functions. Multiple genetic algorithms can run in parallel inside a maquette, and will interact with each other based on their temporal relationships. This presentation will include a live performance by Anne-Elisabeth DeCologne of excerpts of a new work for contrabass and electronics, with a demonstration of the patches used to create the piece.
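The split described above, between a generative function driven by a set of parameters and a measuring function that scores candidate solutions, can be sketched in plain Python. This is only an illustration of a generic genetic algorithm, not the OM-Darwin API: the names `generate`, `cost` and `evolve`, the pitch range and the target interval are all assumptions made for the example.

```python
import random


def generate(params):
    """Generative side: turn a parameter list into MIDI pitches (identity here)."""
    return list(params)


def cost(pitches, target_interval=2):
    """Measuring side: penalise melodic steps that differ from the target interval."""
    return sum(abs(abs(b - a) - target_interval)
               for a, b in zip(pitches, pitches[1:]))


def evolve(length=8, pop_size=30, generations=50, seed=0):
    """Hypothetical minimal genetic algorithm with elitism.

    Returns the best pitch sequence and the best cost seen at each generation.
    """
    rng = random.Random(seed)
    population = [[rng.randint(60, 72) for _ in range(length)]
                  for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        population.sort(key=lambda p: cost(generate(p)))
        history.append(cost(generate(population[0])))
        survivors = population[: pop_size // 2]  # best half is kept unchanged
        children = []
        while len(survivors) + len(children) < pop_size:
            mother, father = rng.sample(survivors, 2)
            cut = rng.randrange(1, length)
            child = mother[:cut] + father[cut:]  # one-point crossover
            child[rng.randrange(length)] = rng.randint(60, 72)  # point mutation
            children.append(child)
        population = survivors + children
    population.sort(key=lambda p: cost(generate(p)))
    best = generate(population[0])
    history.append(cost(best))
    return best, history


best_pitches, best_costs = evolve()
print(best_pitches, best_costs[-1])
```

Because the best half of each generation is carried over unchanged, the best cost never gets worse from one generation to the next. Swapping in another `cost` function changes the musical criterion without touching the search loop.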

Composition in the Flesh - Physical modelling in composition

Anders Vinjar : Séminaires Recherche et Création, IRCAM

With the participation of the RNSC / MaMuX seminar

27/10 2014 (12h-13h)

Physical models used in synthesis and performance (e.g. gesture control) have proved very valuable. Some of the principal benefits - linear control of complex systems, intuitive behavior, easy one-to-many mappings - represent large potential for composition applications as well. However, compared to efforts in synthesis and performance, little work has gone into testing applications of physical models to composition. Some of the motivations, prerequisites and assumptions for applying physical modelling to composition tasks are discussed, and some possible gains are suggested. An implementation of a general CAC environment with physical-modelling capabilities is suggested, combining OpenMusic and ODE in a modular way, providing a powerful and flexible environment for working with physical models in composition tasks.

⇒ [video]

A Californian Composer's Dive Into Real-Time Technology and Computer-Aided Composition

Matthew Schumaker / Séminaires Recherche et Création, IRCAM

26/05/2014

Invited talk by Matthew Schumaker, PhD candidate in Music Composition at the University of California, Berkeley.

Matt Schumaker will present his work with live electronics and computer-aided composition in three recent pieces: In threatening possibilities (2012), for two singers, large ensemble and electronics; Nocte Lux (2013), for two cellos, bass and electronics; and a work-in-progress for soprano and orchestra. The first two were undertaken at UC Berkeley's Center for New Music and Audio Technologies (CNMAT) and the third is being created during an ongoing Berkeley Ladd fellowship in Paris. This work engages live-electronics with real-time voice and instrument processing using Ircam objects for granular synthesis and a variety of other signal processing. Live electronics are employed to create a hybrid instrument: a custom-designed, computer-interactive sampler keyboard that performs with continuously changing, physical modelling sound sets created in Modalys. Computer-aided composition tools in OpenMusic are employed to investigate personal ideas of virtual thematism and interpolation between musical lines. Seen as case studies of a Californian composer using computer music tools and programming environments, these works bring up questions concerning the prevalent composer/programmer paradigm. How does a person who is first a composer and only secondarily and of necessity a programmer, engage fruitfully with the vast possibilities and intricacies afforded by such tools? Given the realities of a one-person development team, can a composer's vision sometimes be served by adopting a hybrid approach combining aspects of the ideal flexibility and musicality of the "totally live" real-time approach with substantial "time-deferred," pre-recorded elements? […]

⇒ Séminaires Recherche et Création de l'IRCAM

Salle Stravinsky

26 May 2014, 12h-13h

Choreography and Composition of Internal Time

Friday 12/12/2014

12h00-13h00 - Studio 5, Ircam

Lecture-demonstration: John MacCallum, composition & Teoma Naccarato, choreography

with Bekah Edie, dance

As a part of the musical research residency at IRCAM for Fall 2014, composer John MacCallum and choreographer Teoma Naccarato are engaged in early research and development for an evening-length production. In this performance with 12 dancers and 12 musicians, ECG data from the dancers is used as an underlying clock for each musician, in order to inform a poly-temporal composition for live ensemble with electronics.

This lecture demonstration will discuss the design of a facile choreographic and compositional environment for real-time interaction with biosensors, as well as questions regarding internal and external perception and interaction. MacCallum and Naccarato will outline ongoing technical and performance-based experiments that integrate biosensors with breath, movement, and environmental stimuli to intervene in and transform their collaborative creative process.

The session will also feature a short performance study for dancer Bekah Edie with live electronics, which explores correlations between cardiac, respiratory, and nervous activity, in order to impact intentional arcs in heart activity - and therefore musical tempo - over time.

Invited talks & Presentations

IRCAM @ BU CNM April 25-29, 2016

Boston University, USA

- 26/04/2016 – 11:30am:

Computer-Aided Composition using OpenMusic

OpenMusic is a visual programming environment dedicated to musical data processing and generation, used by a large community of users to carry out varied aspects of their compositional processes. We will present the main features and characteristics of this environment, as well as a number of applications in contemporary music production.

- 27/04/2016 – 11:00am:

From symbolic music processing to spatial audio – Research directions in computer-aided composition

Computer-aided composition processes traditionally deal with symbolic musical material, manipulated algorithmically and rendered using classical score representations and parameters (pitches, rhythms, etc.). On the other hand, sound processing and spatialization generally run in real-time interactive environments. Research and development carried out during the past 15 years in computer-aided composition systems have aimed at bridging these different fields. This idea will be illustrated through the presentation of musical research projects carried out in the OpenMusic environment, with a particular focus on recent applications integrating the control of sound spatialization in compositional processes.

CCRMA Guest Colloquium

Center for Computer Research in Music and Acoustics, Stanford University, USA

22/02/2016 – 5:30pm

- J. Bresson, P. Donat-Bouillud: Current Research on Composition Software at Ircam.

This talk will be a presentation of two major projects carried out in the Music Representation research group at IRCAM: OpenMusic and Antescofo. OpenMusic is a visual programming language dedicated to music creation and processing. It allows composers to develop musical processes taking the best advantage of the computational, representational and expressive power of a programming language, using a graphical patching interface and musical editors. Antescofo~ is a modular polyphonic score following system as well as a synchronous programming language for real-time computer music composition and live performance. The score following module allows for automatic recognition of score position and tempo from a real-time audio stream, making it possible to synchronize an instrumental performance with computer-realized elements. The synchronous language, in conjunction with its dedicated graphical editor AscoGraph, allows flexible writing of interactions. We will briefly present these two composition tools and discuss their most recent features and current related research.

JUCE Summit 2015

London, 19-20 November 2015

- Integrating Juce-based GUI in Max/MSP, OpenMusic or other computer music environments.

Thibaut Carpentier

⇒ Abstract

Colloque international "Outils et des méthodes innovantes pour l’enseignement de la musique et du traitement du signal"

Université Jean Monnet, Saint-Etienne, 2-3 Nov. 2015

- Dimitri Bouche: De l’esquisse à la composition

Days of research seminars and workshops in Oslo, Norway

University of Oslo, Department of Musicology

Norwegian Academy of Music

24-26/11 2014

The EFFICACe project was presented along with Ircam's latest research and developments on spatial audio at the Department of Musicology of the University of Oslo during a workshop day organised at the fourMs laboratory.

Two days of courses and workshops on OpenMusic were held under the coordination of Prof. Asbjørn Schaathun at the Norwegian Academy of Music.

1° Colóquio Franco-Brasileiro de Criação e Análise Musicais com Suporte Computacional

Universities of Campinas and São Paulo, Brazil, 18-29/08, 2014

The Núcleo Interdisciplinar de Comunicação Sonora (NICS), in partnership with the Faculdade de Engenharia Elétrica e Computação (FEEC), the Instituto de Artes (IA) and the Centro de Integração, Documentação e Difusão Cultural (CIDDIC) of Unicamp, together with USP and UNESP and with the support of FAPESP, is holding the 1° Colóquio Franco‑Brasileiro de Análise e Criação Musicais com Suporte Computacional.

Professors from the Brazilian universities mentioned above, together with researchers from the RepMus team at IRCAM in Paris (Gérard Assayag, Jean Bresson and Moreno Andreatta), are giving a series of lectures, round tables and classes on musical formalization and on computational models for music analysis and creation.

- 19/08: J. Bresson - Composing with sounds / composing sounds

- 20/08: J. Bresson - Models, programs and interactions in OM: Tools for interactive creation and analysis

⇒ French-Brazilian Colloquium on Computer Aided Music Creation and Analysis

Campinas/São Paulo, Brazil

Aug. 18-29th.

PRISMA Meeting

IRCAM, Paris, 4-8/07/2015

- Computer-aided composition tools and interfaces for the control of sound spatialization

Jérémie Garcia, Xavier Favory, Jean Bresson

CCRMA Guest Colloquium - Dimitri Bouche: Scheduling & Time Structures in Computer Assisted Composition

CCRMA (Center for Computer Research in Music and Acoustics)

Department of Music, Stanford University.

Wed 02/4, 2015

Music composers’ work with computers is generally divided into two distinct stages: composition and performance. Each stage requires specific methodology and software: computer-aided composition involves time and high computation capabilities to produce complex musical scores, while performance and live rendering require reactive environments with precise real‐time constraints. Contemporary music composers constantly challenge these kinds of established categories, using unusual objects and behaviors in their music or considering the variations in performance as an actual part of the composition.

Dimitri's work consists in extending the properties of a computer-assisted composition environment (OpenMusic) to meet these challenges. It mostly means defining a scheduling architecture for computer-assisted composition software, but also providing high-level tools for composers and efficient display of potentially complex and non-deterministic data. Even if there are software products that embed interesting scheduling capabilities, no general model has been well established.

After a brief historical survey on the evolution of computer assisted composition and computer music systems (and Lisp-based music systems), Dimitri will present his current work and developments.

Séminaire "Nouveaux espaces de la notation musicale"

INA-GRM - Friday 6/02/2015

AFIM work group (Association Française d'Informatique Musicale)

With Karim Barkati (Weezic), Dominique Fober (Grame), Robert Piéchaud, Filipe Lopes and Pierre Couprie (IReMus).

⇒ Représentations interactives de données musicales dans OpenMusic (J. Bresson).

John MacCallum and Teoma Naccarato: Heart rate data from contemporary dancers

Séminaires Recherche et Création, IRCAM

13/10 2014 (12h-13h)

Composer John MacCallum and choreographer Teoma Naccarato propose a collaborative project that examines the use of real-time heart rate data from contemporary dancers to drive a polytemporal composition for instrumental ensemble with live electronics.

In collaboration with the Musical Representations Team as part of the EFFICACe Project.

Rhythm Trees at the Vienna Summer of Logic

Vienna, Austria, 13/07/2014

Presentation at the International Federation for Information Processing (IFIP) Working Group 1.6 on Term Rewriting, by Florent Jacquemard.

Rhythm Tree Rewriting (Florent Jacquemard, Jean Bresson and Pierre Donat-Bouillud). In traditional western music notation, the durations of notes are expressed hierarchically by recursive subdivisions. This led naturally to a tree representation of melodies widespread in systems for assistance to music authoring. We will see how term rewriting techniques can be applied to computer music problems, in particular to the problem of rhythm quantization: the transcription of a sequence of dates (real time values) into a music score. Besides the question of rhythm representation, an interesting problem in this context is the design and concise description of large rewrite rule sets and decision problems for these descriptions.
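To make the quantization problem mentioned in this abstract concrete, the sketch below snaps a sequence of onset dates (in seconds) to a regular grid of beat subdivisions. It is a naive nearest-grid-point method given for illustration only, not the rhythm tree rewriting approach presented in the talk; the function name `quantize_onsets` and its parameters are hypothetical.

```python
from fractions import Fraction


def quantize_onsets(onsets_sec, tempo_bpm=60, subdivisions=4):
    """Snap onset dates (seconds) to the nearest point of a regular grid.

    The grid divides each beat into `subdivisions` equal parts.
    Returns positions in beats as exact fractions.
    Hypothetical helper, for illustration only.
    """
    beat_sec = 60.0 / tempo_bpm
    quantized = []
    for t in onsets_sec:
        beats = t / beat_sec                    # real-valued position in beats
        step = round(beats * subdivisions)      # index of the nearest grid point
        quantized.append(Fraction(step, subdivisions))
    return quantized


onsets = [0.02, 0.49, 0.77, 1.26, 2.01]
print(quantize_onsets(onsets))
# positions in beats: 0, 1/2, 3/4, 5/4, 2
```

A fixed grid like this one ignores the hierarchical subdivisions of notated rhythm, which is precisely what tree-based representations are meant to capture.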

⇒ Vienna Summer of Logic

9-24 July 2014

Séminaire Agorantic: Culture, Patrimoines, Sociétés Numériques

Université d'Avignon, 13/06/2014

Structure Fédérative de Recherche (S)FR Agorantic

Programmation et représentation musicale interactive en composition assistée par ordinateur

J. Bresson (IRCAM - UMR STMS).

The use of programming languages for composition encourages a dual view of musical structures, in which objects are considered as processes unfolding in time and, conversely, processes become powerful and expressive representations of musical objects. This "programmatic" view of musical creation does not, however, exclude user (composer/programmer) interfaces and interactions, which are a key part of the research we are currently conducting. Beyond the interactions between machines and musical instruments, which are increasingly frequent and rich in contemporary musical practice, there is also a strong interaction between composers and the computer programs they produce or use, whether during the composition phase or even during the performance of a work. Visual languages foster this interaction and offer representations that combine functional or procedural aspects with "manual" interventions on the data involved in the processes, accessible in forms familiar to musicians (scores, sound waveforms, control curves, etc.). We will illustrate these points with a presentation of the OpenMusic environment, trying to describe its specificities from the point of view of the musical and computational paradigms underlying this notion of computer-aided composition, as well as our current research and development within the ANR EFFICACe project, which focuses on the interactive aspects involved in supporting creative processes in the contexts of musical creation, performance or analysis.

Presentation slides [PDF]

⇒ Full seminar programme.

Université d'Avignon

Site Sainte Marthe, Amphi 2E08.

12-13 June 2014

IRCAM Forum Workshops 2013 - OM 6.7 presentation

20/11/2013

OM 6.7 includes the first prototype of a redesigned scheduling system and audio architecture. It has been ported to Linux thanks to a collaboration with Anders Vinjar and the BEK center (Bergen, Norway) and coupled with several new external audio/MIDI rendering systems such as Jack, FluidSynth, MPlayer… The OM-Faust library (by Dimitri Bouche, developed in the framework of the Inedit project), as well as new releases of the OMPrisma and OM-SoX libraries presented by Marlon Schumacher (CIRMMT / McGill University, Montréal), are compatible with this new version and controlled by this new architecture.

Meeting at UC Berkeley Center for New Music and Audio Technologies (CNMAT)

CNMAT, Berkeley, 11/09/2013

The current and future state of computer-aided composition. In this talk we discussed the fundamentals of computer-aided composition and the computational models present in OpenMusic that set it apart from reactive, real-time environments such as Max/MSP. We intended to point to some directions for future research in computer-aided composition and to draw concrete objectives for the project.

Co-sponsored event by UC Berkeley Department of Music, the Townsend Center for Humanities, and CNMAT

Conferences

⇒ See also the publications page.

10ème Colloque sur la Modélisation des Systèmes Réactifs (MSR 2015)

Inria Nancy‐Grand Est, 18-20 November 2015

- Articulation dynamique de structures temporelles pour l’informatique musicale

Dimitri Bouche, Jean Bresson

⇒ MSR 2015

inSonic 2015: Aesthetic concepts of spatial audio in sound, music and sound-art

27-28 Nov. 2015 ZKM - HfG, Karlsruhe Germany

Interactive-Algorithmic Control of Sound Spatialization

Jérémie Garcia, Jean Bresson, Thibaut Carpentier, Marlon Schumacher, Xavier Favory

27ème Conférence Francophone sur l’interaction Homme-Machine (IHM 2015)

Toulouse, 27-30 October 2015

- Trajectoires : une application mobile pour le contrôle et l’écriture de la spatialisation sonore

Xavier Favory, Jérémie Garcia, Jean Bresson

OpenMusic @ Linux Audio Conference

ZKM – Karlsruhe, 3/05/2014

The Linux version of OpenMusic was developed by Anders Vinjar with the support of the BEK center (Bergen, Norway). It embeds parts of the new scheduling and external audio/MIDI rendering systems. The presentation is included in the "Music Programming" session of LAC'2014 to be held at ZKM in Karlsruhe.

⇒ Linux Audio Conference - LAC'2014

ZKM – Karlsruhe, Germany 1-4 May, 2014

Pedagogical actions

Courses and workshops

CNMAT OpenMusic Workshops (UC Berkeley), 1-15/04/2016

J. Bresson. Practical introduction to the OpenMusic computer-aided composition environment.

IRCAM Academie, 20/06/2015

This 3h presentation by J. Bresson and J. Garcia to the Academie attendees was part of the Composition Workshop of the Manifeste festival 2015. It was dedicated to drawing and gesture input in computer-aided composition and OpenMusic.

IRCAM composition and computer music program, 23/02/2015

This 3h presentation to the IRCAM students of the composition and computer music program was focused on some recent developments and applications of the Efficace project : interaction and reactive processes in OpenMusic (J. Bresson), interfaces for the control of sound spatialization (J. Garcia) and guided improvisation (J. Nika).

ATIAM Master program, Ircam UPMC, 2 and 9/12/2014

These two 3h courses in the Masters program ATIAM (Acoustics, Signal Processing and Computer Science applied to music) were practical introductory sessions to OpenMusic.

CIEE Program, 3-4/07/2014

This 12h course was a general introduction to computer-aided composition and OpenMusic. It was given during IRCAM Manifeste 2014 for the "Summer contemporary music creation + critique" program of the CIEE (Council on International Educational Exchange).

IRCAM professional training, Paris, 10/03/2014

The last level of this series of professional training was dedicated to programming libraries and integrating foreign code in the OpenMusic environment.

ACROE, Grenoble, 31/01/2014

This 3h workshop was an introduction to OpenMusic for researchers from the ACROE research center and the AST (Arts, Sciences & Technology) program of PHELMA / INP Grenoble.

IRCAM composition and computer music program, 9/12/2013

This presentation at the IRCAM composition and computer music program ("Cursus 1") was focused on the concepts of time and computation in computer music systems and introduced a reactive computation model in OpenMusic. It was completed with a demonstration by Jeremie Garcia on the use of this reactive model in his recent works on paper interfaces for computer music applications.